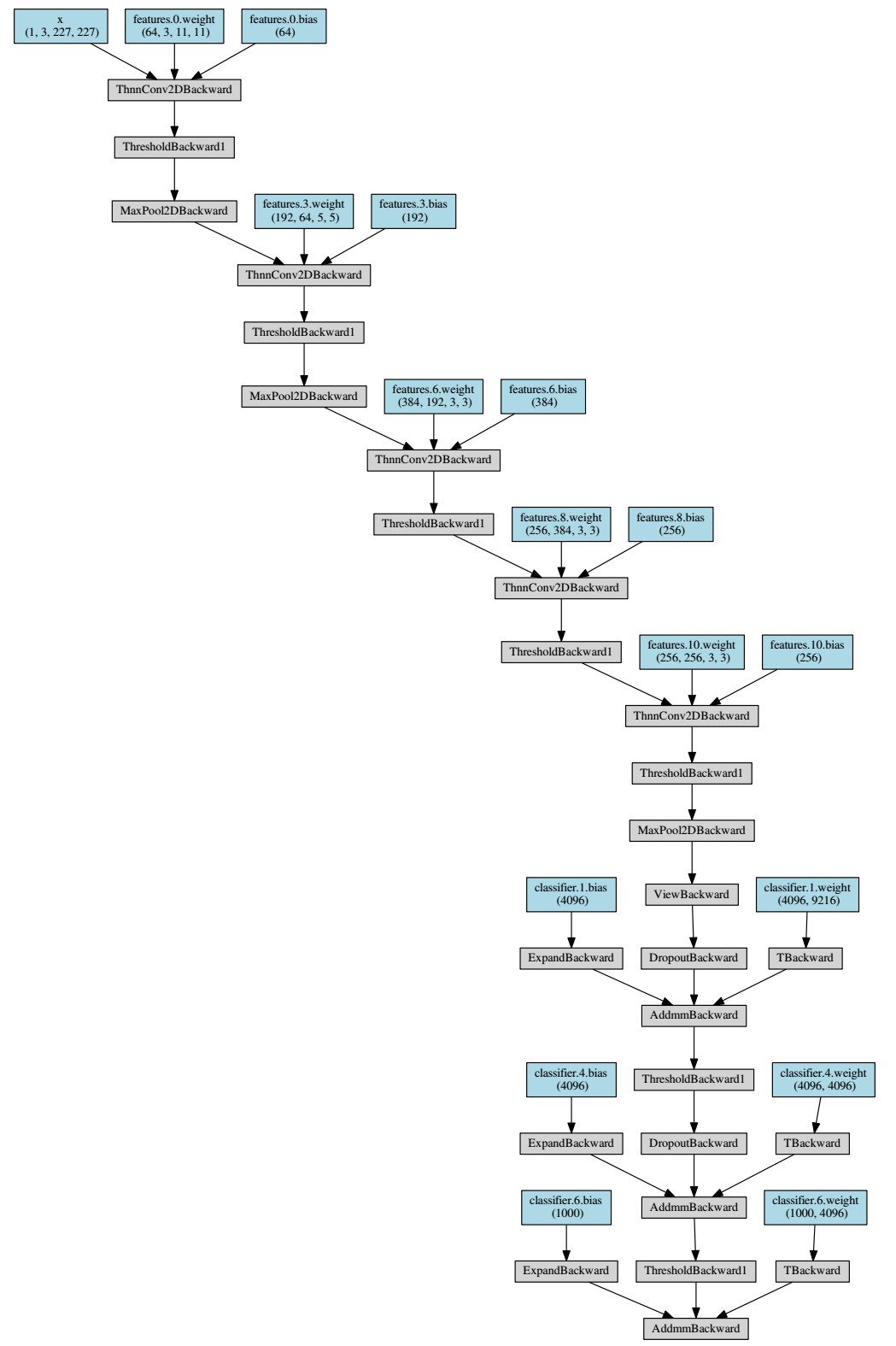

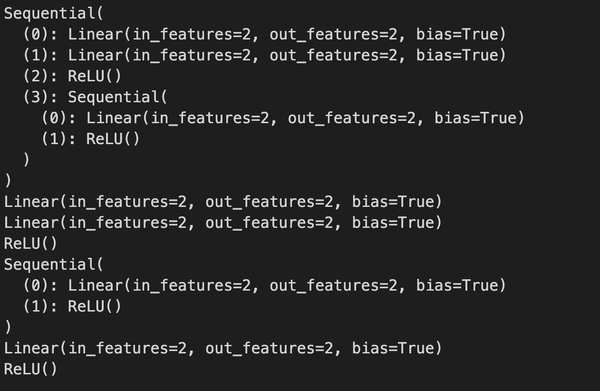

The NN does not seem to capture the non-linearity of $\cos(x)$ at the right end of the interval. The reason behind this is the asymmetry I found in the approximation of $\cos(x)$ with this NN over the interval. In the preparation of the training set we didn't use $T=2\pi$ for the $\cos(x)$ function; instead we used $3\pi$.

The network is built from the `nn` building blocks `nn.Module` and `nn.Sequential`. `Module` is the main building block: it defines the base class for all neural networks, and you must subclass it. `nn.Sequential` enables us to build a logistic regression model just like we can build our linear regression models. Let's define a logistic regression model object that takes a one-dimensional tensor as input. We simply need to define a tensor for the input and process it through the model.

`torch.Tensor` is the central class of PyTorch. When you create a tensor and set its `requires_grad` attribute to `True`, the package tracks all operations on it. The gradient for this tensor is accumulated into its `.grad` attribute, and this happens on every subsequent backward pass: the accumulation (or sum) of all the gradients is calculated when `.backward()` is called.

Validating the model with the validation set: for the validation, we use the last 30% of the randomly shuffled indices. The validation loss is computed with `torch::mse_loss(validation_values, y_validation)`, and the model is saved with `torch::save(y_model_sequence, "y_model_sequence.pt")`.
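The validation split mentioned above (the last 30% of the randomly shuffled indices) can be sketched in plain standard C++, without libtorch. This is only an illustration under stated assumptions: the helper name `split_indices`, the fixed `seed`, and the integer `validation_percent` parameter are hypothetical and do not come from the original code.

```cpp
#include <algorithm>
#include <cstddef>
#include <numeric>
#include <random>
#include <utility>
#include <vector>

// Hypothetical helper (not from the original post): shuffle the indices
// 0..n-1, then use the first part for training and the last
// `validation_percent` percent for validation.
std::pair<std::vector<std::size_t>, std::vector<std::size_t>>
split_indices(std::size_t n, unsigned seed, std::size_t validation_percent = 30) {
    std::vector<std::size_t> indices(n);
    std::iota(indices.begin(), indices.end(), std::size_t{0});

    // A fixed seed is assumed here so the split is reproducible.
    std::mt19937 rng(seed);
    std::shuffle(indices.begin(), indices.end(), rng);

    // Integer arithmetic avoids floating-point rounding in the split size.
    const std::size_t n_validation = n * validation_percent / 100;
    const auto n_training = static_cast<std::ptrdiff_t>(n - n_validation);

    std::vector<std::size_t> training(indices.begin(), indices.begin() + n_training);
    std::vector<std::size_t> validation(indices.begin() + n_training, indices.end());
    return {training, validation};
}
```

With 100 samples this yields 70 training indices and 30 validation indices, and every index appears in exactly one of the two sets. In a libtorch program the resulting index lists would then be used to select rows from the input and target tensors.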

PyTorch accumulates the network's weight gradients across successive backward passes, so `optimizer.zero_grad()` is called to zero the gradients, ensuring that previous passes do not influence the direction of the current gradient.
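To make the accumulation behaviour concrete, here is a minimal sketch in plain standard C++ that mimics it with a hand-written gradient buffer. It does not use libtorch: the `Param` struct and the `backward` and `zero_grad` functions are hypothetical stand-ins for a tensor's `.grad` buffer, a backward pass, and `optimizer.zero_grad()`, and the loss $(wx - y)^2$ is chosen only for illustration.

```cpp
// Hypothetical stand-in for a trainable scalar: a value plus a gradient
// buffer that is added to on every backward pass.
struct Param {
    double value = 0.0;
    double grad = 0.0;
};

// Backward pass for the illustrative loss L(w) = (w * x - y)^2.
// The derivative dL/dw = 2 * (w * x - y) * x is ADDED to the buffer,
// not assigned, which is the accumulation described above.
void backward(Param& w, double x, double y) {
    w.grad += 2.0 * (w.value * x - y) * x;
}

// Counterpart of zeroing the gradients before the next pass.
void zero_grad(Param& w) {
    w.grad = 0.0;
}
```

For `w.value = 1`, `x = 2`, `y = 0`, a single backward pass contributes a gradient of 8. Two passes without zeroing leave 16 in the buffer, so an update step would move twice as far as intended; calling `zero_grad` between passes restores the expected value of 8.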

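The convergence data is written to a CSV file opened with `ofstream conv_file("convergence_data.csv")`. The training loss is computed as `loss_values = torch::mse_loss(training_prediction, y_training)`, and the error is reported with respect to `y_training`: the maximum is extracted with `double max_loss = loss_values.max().item<double>()` and printed at the end of the output statement as `<< ", max(loss_values) = " << max_loss << endl`.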
